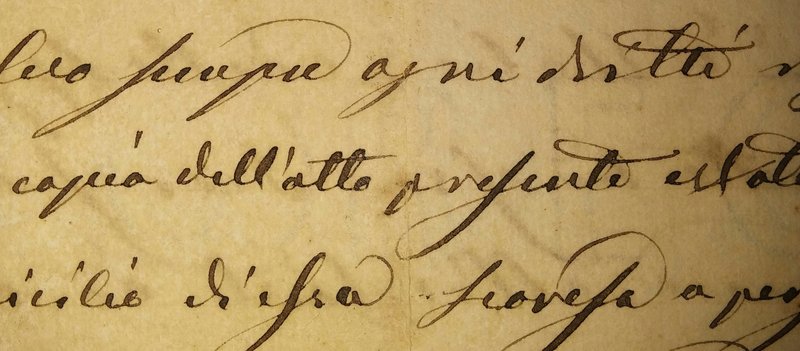

At the crossroads of computer vision and natural language processing, Automatic Text Recognition (ATR) has received significant attention thanks to its practical applications: digitising historical documents, aiding data entry and enabling natural human-computer interaction. As researchers strive to improve ATR systems, one crucial aspect is often overlooked: the reproducibility of research results. In this blog post, we explore why reproducibility matters in ATR research and how it contributes to the advancement of the field.

What is reproducibility in research?

Reproducibility in research refers to the ability of other researchers to obtain the same results as a given study when they have access to the same data, code and experimental conditions. It is a cornerstone of scientific research: it allows findings to be validated, guarantees their veracity and provides a solid foundation for future developments and technological progress.

The challenges of ATR research

ATR, and handwritten text recognition (HTR) in particular, is a complex and multidisciplinary field that combines elements of computer vision, deep learning and natural language processing. Researchers developing ATR systems face numerous challenges, including the variability of writing styles, the diversity of writing tools, and variations in the content, format and quality of scanned documents. Given these challenges, reproducibility becomes even more critical in ATR research.

Why reproducibility is important in ATR

- Validation of research results: Reproducibility ensures that reported results are neither due to chance nor specific to a particular dataset. When ATR research is reproducible, the credibility of its findings is strengthened, allowing the wider scientific community to trust and build on them.

- Benchmarking and comparison: In ATR, where many algorithms and models are proposed, reproducibility enables fair benchmarking and comparison between different approaches. This ensures that the best-performing methods are correctly identified and that the field can progress efficiently.

- Identifying weaknesses: Reproducibility helps to identify weaknesses and limitations of existing ATR methods. When other researchers attempt to reproduce results, they may uncover flaws or potential areas for improvement, ultimately driving innovation in the field.

Steps toward ensuring reproducibility

The following elements are essential for a scientific community to offer guarantees of reproducible research:

Open access to data:

Researchers should provide open access to the datasets used in their experiments. Sharing these datasets allows others to verify the results, conduct further investigations and take part in open competitions and benchmarks aimed at pushing the boundaries of ATR research. The ATR community has a long history of publishing open datasets, so access to data is no longer a major obstacle.

See:

- TC11 Online datasets

- HTR-United

- Handwritten datasets on Zenodo

- Ready-to-use datasets on HuggingFace prepared by TEKLIA
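Sharing a dataset is most useful when others can check that they hold exactly the same version. As an illustration, here is a minimal Python sketch, assuming the dataset is a local directory of files; the function names are hypothetical and not part of any of the projects listed above. It computes a SHA-256 checksum for every file and a single fingerprint of the whole dataset that could be quoted alongside published results.

```python
import hashlib
from pathlib import Path


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large image files do not have to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root: str) -> dict[str, str]:
    """Map each file path (relative to root, POSIX style) to its SHA-256 digest."""
    root_path = Path(root)
    return {
        p.relative_to(root_path).as_posix(): sha256_file(p)
        for p in sorted(root_path.rglob("*"))
        if p.is_file()
    }


def dataset_fingerprint(manifest: dict[str, str]) -> str:
    """Combine the per-file digests into one digest identifying the whole dataset."""
    digest = hashlib.sha256()
    for rel_path, file_hash in sorted(manifest.items()):
        digest.update(f"{rel_path}\t{file_hash}\n".encode("utf-8"))
    return digest.hexdigest()
```

Publishing the manifest with the dataset lets anyone re-run `build_manifest` on their copy and see exactly which files differ, while the fingerprint is short enough to cite in a paper.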

Code and models sharing:

Making the source code of ATR algorithms and models available to the community ensures transparency and encourages collaboration. It also makes results easier to reproduce and allows others to contribute to benchmarking efforts.

See:

- HTR on GitHub

- PyLaia, the open-source HTR library maintained by TEKLIA

- HTR Kraken models on Zenodo

- TEKLIA open-source models on HuggingFace

Clear documentation:

At TEKLIA, we take code quality and documentation very seriously. Detailed documentation of experimental setups, data pre-processing and model configurations is essential. This ensures that others can accurately replicate the experiments and participate in open benchmarking initiatives with a clear understanding of the methodology.
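As an illustration of the kind of information worth recording, here is a minimal Python sketch; the function names and configuration values are hypothetical and not taken from TEKLIA's tools. It draws the train/validation split from a seeded random generator, so the same seed always gives the same split, and gathers the configuration, seed and environment into a single JSON-serialisable record that can be published with the results.

```python
import json
import platform
import random
import sys


def split_dataset(items, seed, val_fraction=0.1):
    """Deterministically shuffle items and split them into (train, validation) lists."""
    ordered = sorted(items)    # sort first so the split does not depend on input order
    rng = random.Random(seed)  # dedicated RNG, unaffected by other code calling `random`
    rng.shuffle(ordered)
    n_val = int(len(ordered) * val_fraction)
    return ordered[n_val:], ordered[:n_val]


def experiment_record(config, seed):
    """Gather what is needed to rerun an experiment into one JSON-serialisable dict."""
    return {
        "config": config,  # hyper-parameters, pre-processing options, etc.
        "seed": seed,
        # random.shuffle's algorithm is not guaranteed to be stable across Python
        # versions, so the interpreter version is part of the experimental conditions.
        "python": sys.version,
        "platform": platform.platform(),
    }


if __name__ == "__main__":
    # Hypothetical configuration and file names, for illustration only.
    config = {"img_height": 128, "learning_rate": 3e-4, "unicode_normalization": "NFC"}
    lines = [f"line_{i:03d}.png" for i in range(20)]
    train, val = split_dataset(lines, seed=42)
    record = experiment_record(config, seed=42)
    record["val_files"] = val
    print(json.dumps(record, indent=2))
```

Deep learning frameworks keep their own random generators and may use non-deterministic GPU kernels, so in a real training pipeline those seeds and settings would need to be fixed and recorded as well.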

Standard evaluation metrics:

Establishing standard evaluation metrics and protocols in ATR research helps to create a level playing field for comparing different methods, making it easier for researchers to participate in open competitions and benchmarks and to evaluate their work in a standardised context.

See:

- JiWER, a simple and fast Python package for evaluating automatic speech recognition systems (it also works for ATR)

- Nerval, a Python package for NER evaluation on noisy text
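The two metrics most commonly reported in ATR are the character error rate (CER) and the word error rate (WER). Both are the edit (Levenshtein) distance between the reference transcription and the system output, divided by the length of the reference, counted in characters or in words respectively. The packages above compute these for you; the minimal Python sketch below, whose function names are our own rather than JiWER's API, only makes the definition explicit. It applies no text normalisation, and because choices such as whitespace handling or Unicode normalisation change the scores, they should be reported alongside the numbers.

```python
from typing import Sequence


def levenshtein(ref: Sequence, hyp: Sequence) -> int:
    """Minimum number of substitutions, insertions and deletions turning ref into hyp."""
    prev = list(range(len(hyp) + 1))  # distances from an empty ref prefix
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            curr.append(min(
                prev[j] + 1,             # deletion of r
                curr[j - 1] + 1,         # insertion of h
                prev[j - 1] + (r != h),  # substitution, free when tokens match
            ))
        prev = curr
    return prev[-1]


def cer(reference: str, hypothesis: str) -> float:
    """Character error rate: character edit distance over reference length."""
    if not reference:
        raise ValueError("reference must not be empty")
    return levenshtein(reference, hypothesis) / len(reference)


def wer(reference: str, hypothesis: str) -> float:
    """Word error rate: edit distance over whitespace-separated words."""
    ref_words = reference.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")
    return levenshtein(ref_words, hypothesis.split()) / len(ref_words)
```

For example, comparing the reference "the cat sat" with the output "the cot sat" gives one substitution: a CER of 1/11 (about 0.091) and a WER of 1/3, which shows how strongly the same error can weigh depending on the metric.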

Conclusion

In the dynamic and rapidly evolving field of handwriting recognition, reproducibility is not a mere academic formality; it is the cornerstone of progress. By prioritising reproducibility, researchers strengthen the credibility and transparency of ATR research. TEKLIA will continue to be as involved as possible in the open-source publication of data, source code, models and metrics, to advance the technology and make it available to as many people as possible.

Credits:

- Photo by Alessio Fiorentino on Unsplash